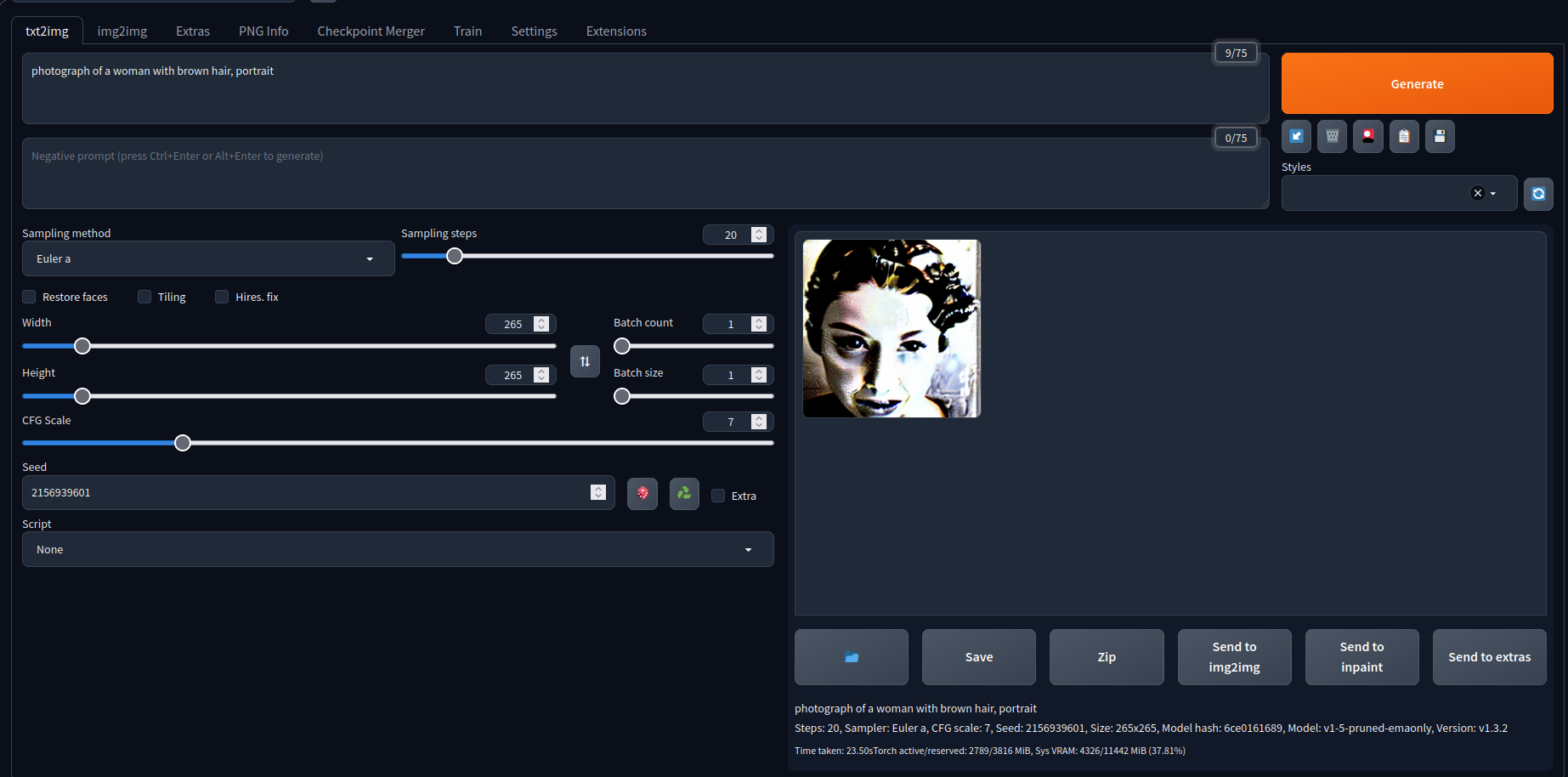

I just installed Stable Diffusion on my homelab and haven't changed any settings, but the outputs are really bad. I tried following tutorials, but my install just produces really weird stuff.

What am I doing wrong?

I’d also suggest that using a different model (Deliberate 11, in this case), changing the prompt a bit, and using a negative embedding can help, even at 256x256.

an ai generated photograph of a woman with brown hair

Prompt: photograph of a woman with brown hair, portrait, masterpiece, trending on artstation

Negative prompt: easynegative

Steps: 20 | Sampler: Euler a | CFG scale: 7 | Seed: 2156939601 | Size: 256x256 | Model hash: 57d103206a | Model: deliberate_v11-pruned | VAE: vae-ft-mse-840000-ema-pruned | Clip skip: 1 | Version: 8749067 | Token merging ratio: 0.5 | Parser: Full parser | Used embeddings: easynegative [119b]
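If you want to compare settings like these between runs, the pipe-separated line is easy to pull apart. This is just a rough sketch: `parse_generation_params` is a name I made up, and it assumes every field looks like `Key: value` with `|` between fields, as in the line above.

```python
def parse_generation_params(line: str) -> dict[str, str]:
    """Split a 'Key: value | Key: value' settings line into a dict."""
    params = {}
    for field in line.split("|"):
        # Split on the first colon only, so values may contain colons.
        key, sep, value = field.partition(":")
        if sep:  # skip fragments that are not 'Key: value'
            params[key.strip()] = value.strip()
    return params


# A shortened copy of the settings line from the post above.
line = (
    "Steps: 20 | Sampler: Euler a | CFG scale: 7 | Seed: 2156939601 | "
    "Size: 256x256 | Model: deliberate_v11-pruned | Clip skip: 1"
)
params = parse_generation_params(line)
width, height = (int(n) for n in params["Size"].split("x"))
print(params["Sampler"], params["Steps"], width, height)
```

All values come back as strings, so convert the ones you need (steps, seed, size) before reusing them.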

The main problems are the resolution, which is too low, and the sampler, which isn't the best choice. Try again with those exact settings, same seed and same prompt, BUT at 512x512, and you should get a noticeably better result.
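If you would rather script the retry than click through the UI, something like this should work, assuming your install exposes an AUTOMATIC1111-compatible HTTP API at `/sdapi/v1/txt2img` (in AUTOMATIC1111 that means launching with `--api`). The base URL, port, and the helper names `build_txt2img_payload` and `txt2img` are my own assumptions; the prompt, seed, and other settings are copied from the post above, with only the size changed. The model itself still has to be selected in the UI.

```python
import json
import urllib.request


def build_txt2img_payload(width: int = 512, height: int = 512) -> dict:
    """Same settings as the 256x256 example, with the size made adjustable."""
    return {
        "prompt": (
            "photograph of a woman with brown hair, portrait, "
            "masterpiece, trending on artstation"
        ),
        "negative_prompt": "easynegative",
        "steps": 20,
        "sampler_name": "Euler a",
        "cfg_scale": 7,
        "seed": 2156939601,
        "width": width,
        "height": height,
    }


def txt2img(payload: dict, base_url: str = "http://127.0.0.1:7860") -> dict:
    """POST the payload; base_url is an assumption, adjust it to your setup."""
    request = urllib.request.Request(
        f"{base_url}/sdapi/v1/txt2img",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        # The response JSON carries base64-encoded images under "images".
        return json.load(response)


payload = build_txt2img_payload()  # 512x512 by default
# result = txt2img(payload)  # uncomment once the server is running with the API enabled
```

Keeping the seed fixed while changing only the size makes it easy to see what the resolution alone does.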

Changing the resolution helped. Do you have a recommended sampler?

Sure! Keep in mind that 20 steps might be a little low, and every sampler has a different “style”, but I like DPM++ 2M Karras. It’s a little slower, but the results are usually better. Another interesting one is UniPC: it can produce results similar to DPM++ 2M Karras but faster… though it might not like low step counts. It’s all about finding your favorite trade-off :)

DDIM is great for both fast results, and final results on non-realism images. You can set DDIM to 12 steps and get pretty solid results, which is great when you’re wanting to quickly try prompt variations or search for really good seeds. I usually use 20 steps when getting a final image.

DPM++ is the best I’ve tried for realism results, but it’s slower per step than DDIM and requires 35-40 steps to get good results imo.
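Here is roughly how I would write that workflow down if you script your generations. Treat it as a sketch: the preset names and `seed_sweep` are made up, picking "DPM++ 2M Karras" as the DPM++ variant is my assumption, and sampler labels may be spelled differently in your UI version.

```python
import random

# Sampler/step combinations taken from the comments above.
PRESETS = {
    "draft": {"sampler_name": "DDIM", "steps": 12},           # quick prompt and seed tests
    "final_stylized": {"sampler_name": "DDIM", "steps": 20},  # final non-realism images
    # Assumption: "DPM++ 2M Karras" as the DPM++ variant; 35 is the low end of 35-40.
    "final_realism": {"sampler_name": "DPM++ 2M Karras", "steps": 35},
}


def seed_sweep(prompt: str, preset: str, count: int, rng_seed: int = 0) -> list[dict]:
    """Build `count` settings dicts that differ only in their seed."""
    rng = random.Random(rng_seed)  # fixed RNG seed so the sweep is repeatable
    return [
        {"prompt": prompt, "seed": rng.randrange(2**32), **PRESETS[preset]}
        for _ in range(count)
    ]


drafts = seed_sweep("photograph of a woman with brown hair, portrait", "draft", 4)
for settings in drafts:
    print(settings["seed"], settings["sampler_name"], settings["steps"])
```

Once one of the draft seeds looks promising, rerun that seed with one of the final presets.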

I can’t zoom in on your picture, but are you using any checkpoint models?