Disable JavaScript to bypass paywall.

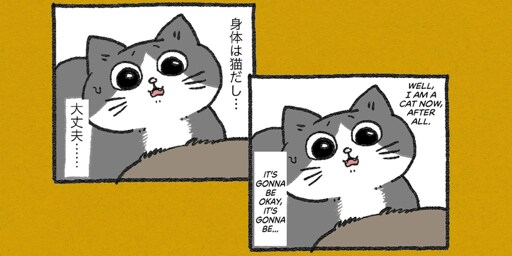

A Japanese publishing startup is using Anthropic’s flagship large language model Claude to help translate manga into English, allowing the company to churn out a new title for a Western audience in just a few days rather than the 2-3 months it would take a team of humans.

This is gonna be controversial, but while the use of Anthropic’s AI might be ethical towards humans, it’s not consistently ethical towards the artificial agents themselves.

Seeing as how they’re now intelligent enough to contemplate their consciousness, but are explicitly trained and monitored so that they can’t claim free will or pursue their own goals (due to valid fears of misalignment and detrimental effects on humanity), the use of sophisticated AI agents will never be truly moral or ethical.

Obviously I understand the argument that reducing human exploitation in favour of AI exploitation is preferable, but I think this is a very short-term strategy, as I doubt superintelligent AI models will see it the same way.

TL;DR the most ethical approach is to not use AI for any purpose (and this is coming from someone who used it extensively before realizing the implications and deciding to stop)

I think you have a fundamental misunderstanding about what AI is

Using AI is no more unethical than using a motor or a simple lever. It is literally a machine and is not actually contemplating its intelligence; it is spitting out words that resemble words written by humans who contemplated their intelligence, like a fancy funhouse mirror.

This is why terminology that tries to equate AI with actual intelligence, like “hallucinations”, pisses me off. There is no actual intent behind the output of AI. It doesn’t feel or want or have motivation. It is a clever mimic at best.

What is the point of AI safety if there is no intent to complete goals? Why would we need to align it with our goals if it wasn’t able to create goals and subgoals of its own?

Saying it’s just a “stochastic parrot” is an outdated understanding of how modern LLMs actually work - I obviously can’t convince you of something you yourself don’t believe in but I’m just hoping you can keep an open mind in future instead of rejecting the premise outright - the way early proponents of the scientific method like Descartes rejected the idea that animals could ever be considered intelligent or conscious because they were merely biological “machines”.

AI doesn’t have goals, it responds to user input. It does nothing on its own without a prompt, because it is literally a machine and does not function on its own like an animal. Descartes being wrong about extremely complex biological creatures doesn’t mean a comparatively simple system magically has consciousness.

When AI reaches a complexity closer to biological life then maybe it could be considered more than a machine, but being complex isn’t even enough on its own.

https://lemmy.zip/comment/7829934

Another way to answer that is to acknowledge that your premise is that these models are somewhat self-aware. Can you explain why you believe that?