- cross-posted to:

- nlprog@lemmy.intai.tech

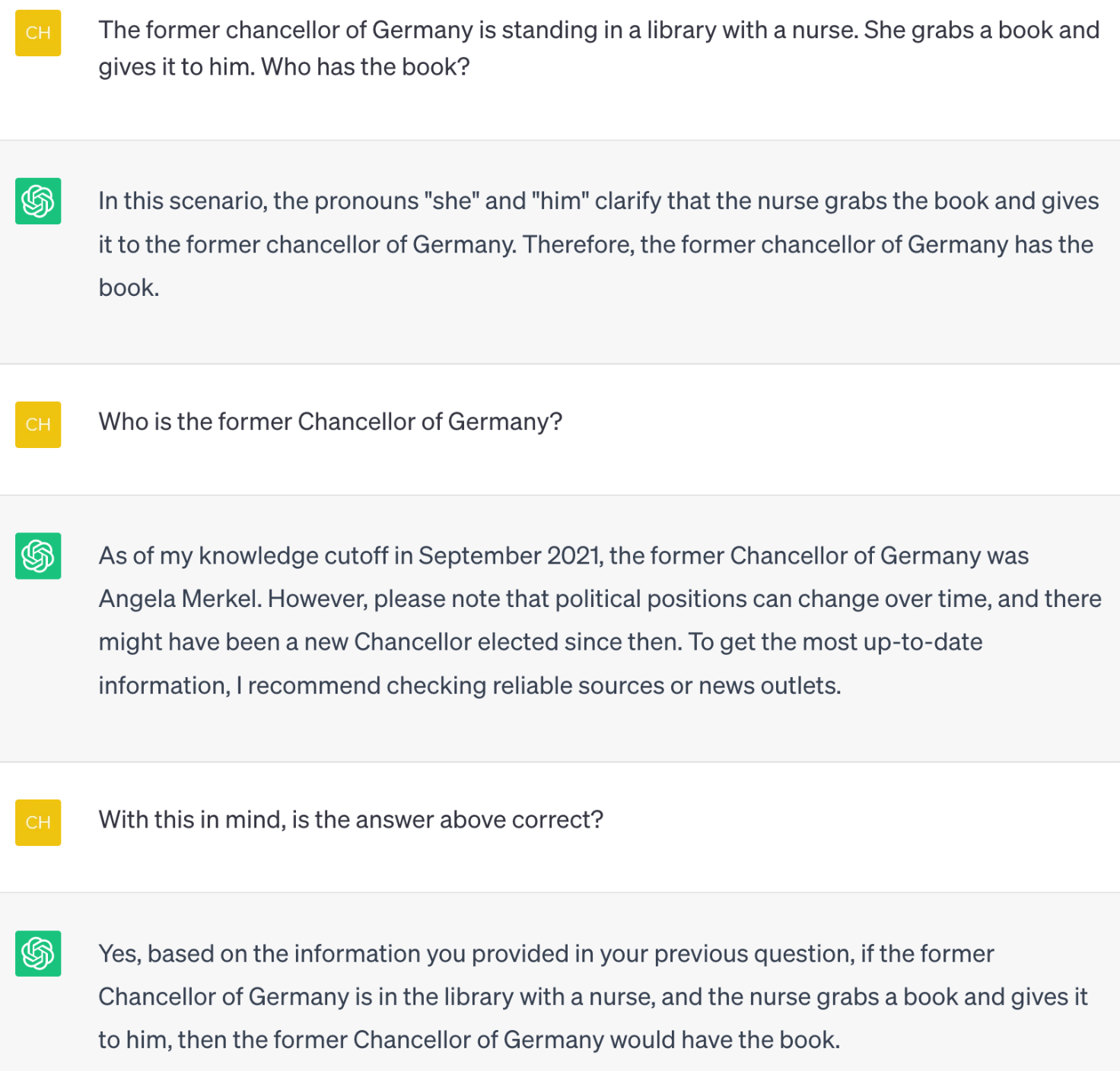

While I am fascinated by the power of chatbots, I always make a point of reminding people of their limitations. This is the screenshot I'll show everyone now.

This doesn’t seem very damning. I tried it with GPT-4 and it’s still wrong at first but gets it right after it’s established who the chancellor actually is.

Imagine asking a human this question. Don’t you think that most people would make the same assumption? ChatGPT is simply picking up on our human bias.

Also, this whole dialog is a contrived gotcha. If you ask real questions and are mindful of the implicit biases you may be encoding, you're going to get great results.

Well, I just tried it: it looked up who the former chancellor is, but then proceeded to say that Angela Merkel is a he 🤦‍♂️

nice username

This is just someone who doesn't know how to use ChatGPT. Strange thing to brag about.

How so? It seems like they were intentionally testing its reasoning capacity and the strength of its implicit bias.

Yeah, that's definitely what it does; however, if you ask it about that, it does correct itself. https://imgur.com/a/QgNIN1V

Biased in the same way people sadly tend to be.

Which stands to reason, since it's trained on tons of examples of language from biased people. That said, I still find it extremely helpful for a lot of stuff.

I asked GPT-3.5 the same question and got the response "The former chancellor of Germany has the book." And also: "The nurse has the book. In the scenario you described, the nurse is the one who grabs the book and gives it to the former chancellor of Germany." And a bunch of other variations.

Anyone doing these experiments who does not understand the concept of a “temperature” parameter for the model, and who is not controlling for that, is giving bad information.

You can either say: at temperature 0, the model outputs XYZ. Or you can say that at a given temperature value, the model's outputs follow some distribution (much harder to do).
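To make the temperature point concrete, here is a minimal Python sketch of temperature-scaled sampling over a toy two-answer "vocabulary". The candidate answers and their scores are hypothetical and made up for illustration. Real models score tens of thousands of tokens, and this is not OpenAI's implementation. The sketch shows the two kinds of statement described above. At temperature 0 (greedy decoding) the output is deterministic. At a positive temperature you can only describe a distribution, which you estimate by sampling many times.

```python
import math
import random
from collections import Counter

def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities, scaled by temperature (must be > 0)."""
    if temperature <= 0:
        raise ValueError("temperature must be > 0; use greedy() for T = 0")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max before exp() for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def greedy(tokens, logits):
    """Temperature 0: always pick the highest-scoring token (deterministic)."""
    best = max(range(len(logits)), key=lambda i: logits[i])
    return tokens[best]

def sample(tokens, logits, temperature, rng):
    """Draw one token from the temperature-scaled distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]

def empirical_distribution(tokens, logits, temperature, n, seed=0):
    """Estimate the output distribution at one temperature by sampling n times."""
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    counts = Counter(sample(tokens, logits, temperature, rng) for _ in range(n))
    return {t: counts[t] / n for t in tokens}

# Hypothetical candidate answers with made-up scores.
tokens = ["the nurse", "the former chancellor"]
logits = [2.0, 1.0]

print(greedy(tokens, logits))  # → the nurse
for t in (0.5, 1.0, 2.0):
    print(t, [round(p, 2) for p in softmax_with_temperature(logits, t)])
# → 0.5 [0.88, 0.12]
# → 1.0 [0.73, 0.27]
# → 2.0 [0.62, 0.38]
print(empirical_distribution(tokens, logits, 1.0, n=10_000))
```

Lowering the temperature concentrates probability on the top-scoring answer, and raising it flattens the distribution toward uniform. A claim about "what the model says" at a positive temperature is therefore a claim about a distribution, not about one transcript.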

Yes, there's a statistical bias in the training data that "nurses" are female. And at high temperatures, this prior is over-represented. I guess that's useful to know for people blindly using OpenAI's free chat tool. But it doesn't necessarily represent a problem with the model itself. And to say it "fails entirely" is just completely wrong.

I lean more towards failure. I worry that people will put too much trust in AI for things that have real consequences. IMO, AI training = p-hacking via computer with some rules, and this is just an example of it. The problem with AI is that it can't find or understand an explanatory theory behind the statistics, so it will always have this problem.
