Can we stop this SEO spam website from being posted here?

Yes, on my laptop, wifi wasn’t working.

The trackpad didn’t work out of the box.

On two different desktops, IPv6 DHCP wasn’t working on either Debian or CentOS.

Plotting the percentage of power that Klipper uses would help.

Did you try moving the head cold, without extruding, to see if it behaves strangely?

Are you talking about deceleration at the moment of impact, or deceleration before impact?

Both.

Isn’t it because of an extrusion speed change?

My point from the start has been that decelerating less mass is less costly. A well-built vehicle will decelerate better against your wall the lighter it is.

A skiing fall at 50 km/h in snow is harmless as long as you don’t twist a limb.

The heavier your vehicle, the more energy it has to dissipate.
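
To put rough numbers on that, here is a quick sketch using the standard kinetic energy formula. The two masses and the speed are made-up values for illustration, not figures from the thread:

```latex
\[
E_k = \tfrac{1}{2} m v^2, \qquad v = 50\ \text{km/h} \approx 13.9\ \text{m/s}
\]
\[
E_k(1000\ \text{kg}) \approx 96\ \text{kJ}, \qquad
E_k(2000\ \text{kg}) \approx 193\ \text{kJ}
\]
```

At equal speed, the energy to dissipate scales linearly with mass, so doubling the mass doubles it.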

You gotta exit your vehicle at some point.

You have less mass to decelerate.

Just seed: you easily rack up TBs of upload by downloading a few popular things.

It’s almost too good to be true.

The lens cost is usually whatever your health insurance is willing to pay.

If it’s only $40, the lenses cost $40.

Old news on an SEO spam website.

One year ago: https://www.animenewsnetwork.com/news/2023-12-06/ascendance-of-a-bookworm-light-novels-get-more-anime-by-wit-studio/.205167

Blasphemy is now illegal.

Does the author ever face repercussions afterward, other than the book being pulled?

The scenario in this post doesn’t hold up; if you want to get rid of “the hot potato” you…

Delete the data, you don’t distribute it?

Filled out; I feel like an endangered species ^^'.

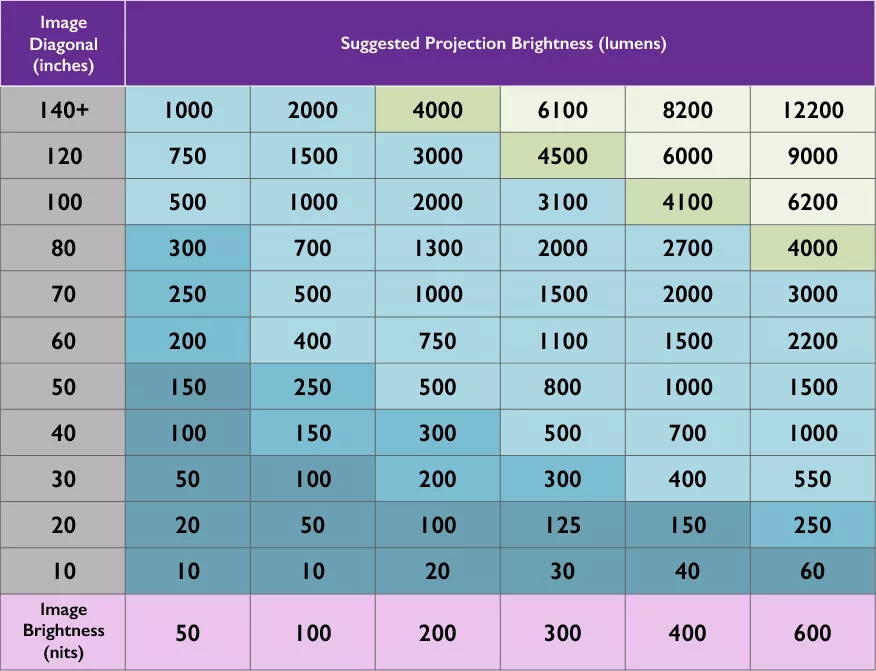

Avoid cheap Chinese projectors at all costs.

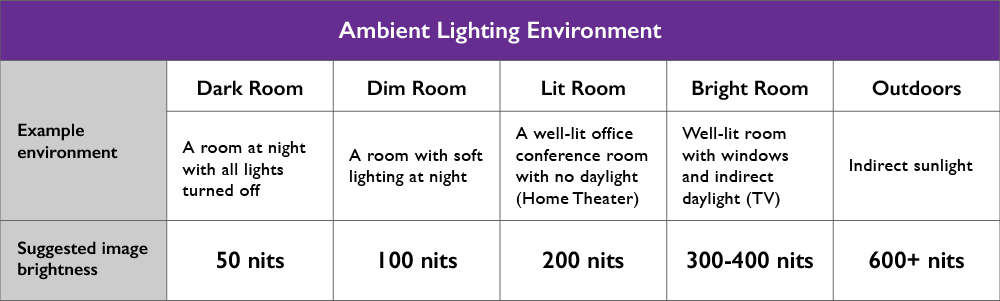

There’s https://www.rtings.com/ for comparing projectors, and for understanding what projector lumen ratings actually correspond to:

Projector images are much duller, and you need to be in the dark unless you want to pay a fortune.

If you want a good website for anime, there’s Anime News Network:

https://www.animenewsnetwork.com/news/2024-11-13/dr-stone-science-future-anime-teaser-reveals-2-new-cast-members/.217838