This is the sort of thing that to me highlights the inherent inefficiency of proprietary software and processes.

“Oh sorry, you’ll need our magic hardware in order to run this software. It simply can’t happen any other way.”

Turns out that wasnt true which of course it isn’t.

Imagine instead of everyone could have been working together on a fully open graphics compute stack. Sure, optimize it for the hardware you sell, why not, but then it’s up to the “best” product instead of the one with the magic software juice.

but then it’s up to the “best” product

that’s the part why it didn’t happen

Cue the nvidia shills that find some reason still why amd is not objectively better.

Not a shill. Don’t like Nvidia. But, this drop-in replacement is more like a framework for a future fully compatible drop-in replacement than a fully functional one. It’s like wine from two decades ago to windows – you might get a few things to work…

After two years of development and some deliberation, AMD decided that there is no business case for running CUDA applications on AMD GPUs. One of the terms of my contract with AMD was that if AMD did not find it fit for further development, I could release it. Which brings us to today.

So AMD already gave up on this, and if they hadn’t they’d have kept it proprietary?

A serious question - when will nvidia stop selling their products and start asking for rent? Like 50 bucks a month is a 4070, your hardware can be a 4090 but thats a 100 a month. I give it a year

It’s more efficient to rent the same GPU to multiple people the same time, and Nvidia is already doing that with GeforceNow.

whenever the infrastructure is good enough they can keep the hardware and stream your workload to you.

When the AI and data center hardware will stop being profitable.

This is the best summary I could come up with:

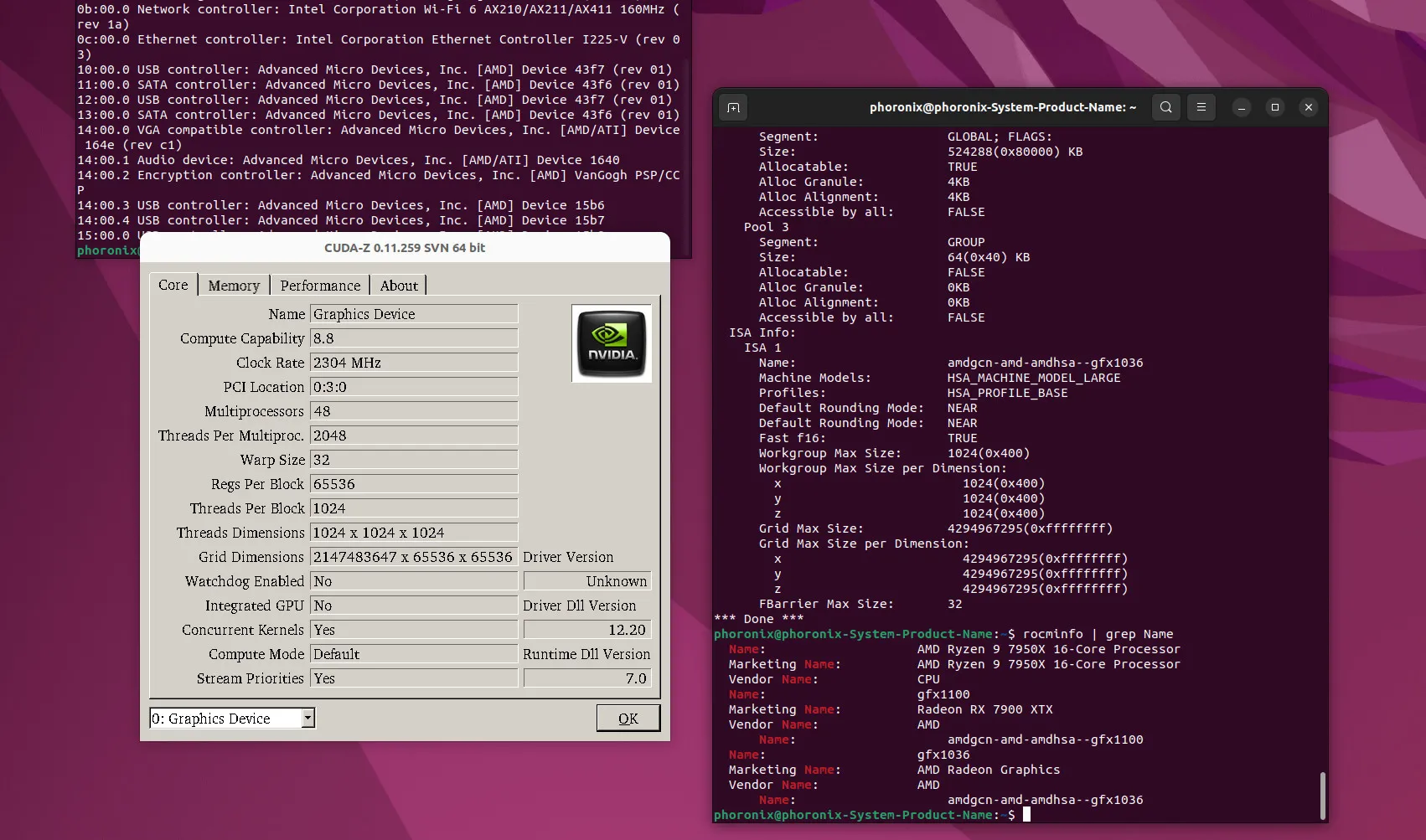

While there have been efforts by AMD over the years to make it easier to port codebases targeting NVIDIA’s CUDA API to run atop HIP/ROCm, it still requires work on the part of developers.

The tooling has improved such as with HIPIFY to help in auto-generating but it isn’t any simple, instant, and guaranteed solution – especially if striving for optimal performance.

In practice for many real-world workloads, it’s a solution for end-users to run CUDA-enabled software without any developer intervention.

Here is more information on this “skunkworks” project that is now available as open-source along with some of my own testing and performance benchmarks of this CUDA implementation built for Radeon GPUs.

For reasons unknown to me, AMD decided this year to discontinue funding the effort and not release it as any software product.

Andrzej Janik reached out and provided access to the new ZLUDA implementation for AMD ROCm to allow me to test it out and benchmark it in advance of today’s planned public announcement.

The original article contains 617 words, the summary contains 167 words. Saved 73%. I’m a bot and I’m open source!

There still is no support for ROCm on linux but this is still good to hear

what do you mean? rocm does support linux and so does zluda.

https://rocm.docs.amd.com/projects/radeon/en/latest/docs/compatibility.html

https://rocblas.readthedocs.io/en/rocm-6.0.0/about/compatibility/linux-support.html

Yes on four consumer grade cards

If I want to have mid-range GPU with compute on linux my only option is nvidia.

Officially, sure, these are the only supported cards. In practice, it works with most AMD cards.