- cross-posted to:

- fuck_ai@lemmy.world

The tool, called Nightshade, messes up training data in ways that could cause serious damage to image-generating AI models. It is intended as a way to fight back against AI companies that use artists’ work to train their models without the creator’s permission.

ARTICLE - Technology Review

ARTICLE - Mashable

ARTICLE - Gizmodo

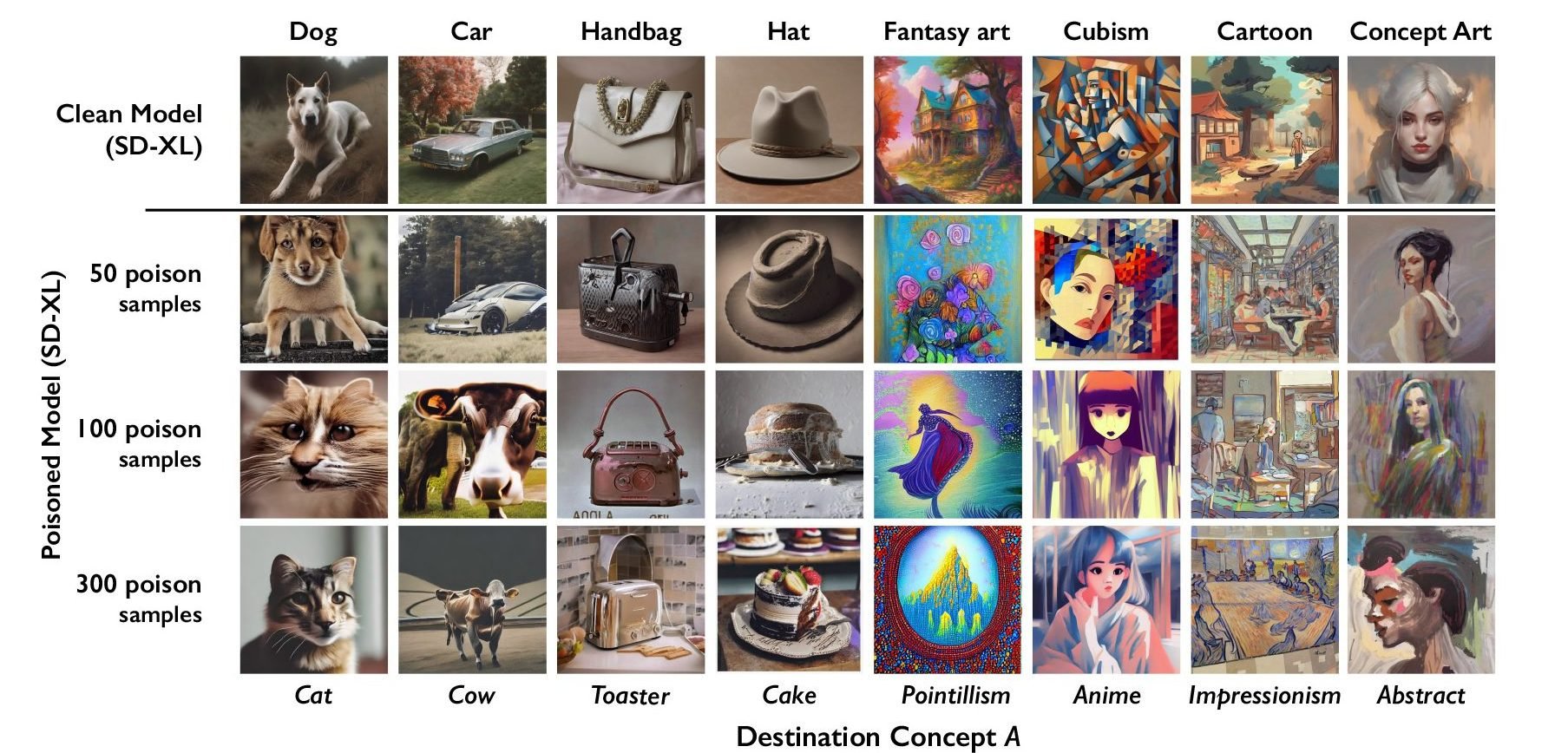

The researchers tested the attack on Stable Diffusion’s latest models and on an AI model they trained themselves from scratch. When they fed Stable Diffusion just 50 poisoned images of dogs and then prompted it to create images of dogs itself, the output started looking weird: creatures with too many limbs and cartoonish faces. With 300 poisoned samples, an attacker can manipulate Stable Diffusion into generating images of dogs that look like cats.

I’m interested to know how they fool the AI while keeping it invisible to the human eye. Do they make additional layers? Do they change every nth pixel? Is every poisoning associated with another poisoned object? (Will a dog always be poisoned towards a cat?, etc…)

Interesting, but a bit hard to understand.

> how they fool the AI while keeping it invisible to the human eye

My guess is that AI companies will try to scrape as much as possible without a human ever looking at the data.

When poisoned data starts to become enough of a problem that humans have to look over every sample, this would increase training cost to a point where it’s no longer worth bothering with in the first place.

But that has absolutely nothing to do with how the mechanism works lol. Of course they are trying to eliminate data scraping, that is the whole controversy

Disappointingly, the article only says that it “changes pixels in ways imperceptible to the human eye”
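Since the article gives no mechanism, here is only a toy illustration of what “imperceptible pixel changes” can mean: every pixel value is shifted by at most a small bound. This is not Nightshade’s method. The function `perturb` and the bound `epsilon` are hypothetical, and random noise stands in for whatever a real tool would compute (presumably an optimized perturbation rather than a random one).

```python
import random

def perturb(pixels, epsilon=2, seed=0):
    """Shift each 8-bit pixel value by at most +/- epsilon (toy stand-in, not Nightshade)."""
    rng = random.Random(seed)
    # Clamp so the result stays in the valid 0..255 range.
    return [min(255, max(0, p + rng.randint(-epsilon, epsilon))) for p in pixels]

pixels = [128] * (64 * 64)   # flat grey placeholder image, one value per pixel
poisoned = perturb(pixels)

# The largest change to any single pixel never exceeds epsilon.
max_change = max(abs(a - b) for a, b in zip(pixels, poisoned))
print(max_change)
```

So it is neither extra layers nor every nth pixel in this sketch: all pixels can change, just by an amount too small to notice on an 8-bit scale.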

I think that is a feature

AI using artists’ work is inevitable and will be a thing. We can’t fight this change; we will resist it, but eventually the majority will accept it for convenience. That’s what our society does. The only chance we get to control it is that for every use of an artist’s work, a small payment is made to them. Think Spotify or something like that. At least until an economic revolution.

Either that, or AI-gen companies have to hire training-set artists or something like that. That’d be better all in all.

Dedicated training artists would be expensive. They would probably buy stock art and make deals with art platforms such as DeviantArt to entice creators to allow their material to be used for training in exchange for small monetary or cosmetic rewards.

I would like AI models to remain free and actually published as files instead of paywalled services

Wdym remain. All the big players cost money

A large portion of AI art out there is made with Stable Diffusion, which can be run locally for free, and has a robust ecosystem of hobbyist trained models, LoRAs, etc. There are also somewhat competitive freely available LLM models.

Most attacks on AI that I see function as protectionism, where the biggest companies will end up being fine, but the people trying to do their own thing are the ones to be locked out.

these don’t work for any longer than a couple of days, at most.

Is this not just adversarial training/generation, but instead of using it to improve the model they just allow it to mess it up? Sorry, blanking on the exact term. My understanding was that some GANs are specifically trained on stuff like this to improve their abilities to differentiate.

Pretty much

It’s on the same path as a GAN, but there is no adversarial network feedback: nothing is telling the generative AI that it is generating bad data.

Seems like a GAN without the benefits for training models, which seems to be what they wanted: to mess with the training data.

I don’t see how this becomes permanent, since the models are already trained. Maybe if the technique becomes easy for artists to apply to their digital works and makes it into the training data for the next models.

This is one of the dumbest things I’ve ever seen.

Anyone who thinks this is going to work doesn’t understand the concept of signal to noise.

Let’s say you are an artist who draws cats. And you are super worried big tech is going to be able to use your images to teach AI what a cat looks like. So you instead use this to pixel mangle it to bias towards looking like a lizard.

Over there is another artist who also draws cats and is worried about AI. So they use this tool to make cats bias towards looking like horses.

All that bias data taken across thousands of pictures of cats ends up becoming indistinguishable from noise. There’s no more hidden bias signal.

The only way this would work is if the majority of all images in the training data of object A all had hidden bias towards object B (as were the very artificial conditions used in the paper).

This compounds by multiple axes for what you’d want to bias. If you draw fantasy cats, are you only biasing away from cats to dogs? Or are you also going to try to bias against fantasy to pointillism? You can always bias towards pointillism dogs, but now your poisoning is less effective combined with a cubist cat artist biasing towards anime dogs.

As you dilute the bias data by trying to cover multiple aspects that can be learned from your images by AI, you further plummet the signal into noise such that even if there was collective agreement on how to bias each individual axis, it’d be effectively worthless in a large and diverse training set.
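The dilution argument above can be sketched with a toy model. The assumption here, made purely for illustration, is that each poisoned image pushes the concept “cat” one unit toward a single target concept, and that different targets are independent directions. The function `mean_bias_norm` and the numbers are hypothetical; this says nothing about how a real diffusion model aggregates training data.

```python
import math

def mean_bias_norm(targets, num_concepts):
    """Length of the averaged push when each image nudges toward one target concept."""
    total = [0.0] * num_concepts
    for t in targets:
        total[t] += 1.0          # each poisoned image adds one unit toward its target
    mean = [x / len(targets) for x in total]
    return math.sqrt(sum(x * x for x in mean))

num_concepts = 10
coordinated = [3] * 1000                                  # every artist picks the same target
uncoordinated = [i % num_concepts for i in range(1000)]   # targets spread evenly

print(mean_bias_norm(coordinated, num_concepts))    # 1.0
print(mean_bias_norm(uncoordinated, num_concepts))  # about 0.316, i.e. 1/sqrt(10)
```

In this toy setup the push toward any single target also drops from 1.0 to 0.1 once the artists stop agreeing, which is the point about needing coordination.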

This is dumb.

Wanna bet this can be undone in 2 seconds by running an automatic script with basic image manipulation?

AI is here to stay. Sure, it sucks to get plagiarized, but there are things artists can do which AI isn’t yet good at. Focus on that, instead of wasting time and energy on palliative solutions.

The last time this popped up was months ago on reddit, and the tool they came up with did something that could be reversed as a batch job using any image manipulator. Which means somebody will write a Stable Diffusion plug-in to fix these images.
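For what “reversed as a batch job” could look like, here is a toy sketch: a 3x3 mean filter applied to a flat grey image carrying a checkerboard of small positive and negative offsets. The checkerboard is an assumption chosen because blurring handles it well. A real poisoning perturbation is presumably optimized and need not be high-frequency, so this does not show that Nightshade itself can be undone this way.

```python
def box_blur(img):
    """3x3 mean filter over interior pixels; border pixels are left unchanged."""
    h, w = len(img), len(img[0])
    out = [row[:] for row in img]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            window = [img[y + dy][x + dx] for dy in (-1, 0, 1) for dx in (-1, 0, 1)]
            out[y][x] = sum(window) / 9.0
    return out

base, eps, size = 128.0, 2.0, 8
# Toy "poison": alternating +eps / -eps offsets on a flat grey image.
poisoned = [[base + (eps if (x + y) % 2 == 0 else -eps) for x in range(size)]
            for y in range(size)]
cleaned = box_blur(poisoned)

interior = [(y, x) for y in range(1, size - 1) for x in range(1, size - 1)]
before = max(abs(poisoned[y][x] - base) for y, x in interior)
after = max(abs(cleaned[y][x] - base) for y, x in interior)
print(before, after)  # the offset shrinks from eps to eps / 9 on interior pixels
```

The catch is that blurring also degrades the actual artwork, so a real cleanup script would have to trade image quality against how much of the perturbation it removes.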

Can you explain what the chart means? It seems like it’s supposed to show that it will degrade the output of the models when the number of poisoned samples increases, however it shows a different subject above than below. Does it morph the subject into another concept?

👆 updated

The problem is that the chart is shit. There’s a prompt on the top and then text on the bottom that looks identical to the prompt, but is actually just what the top prompt was poisoned to look like after 100 or 300 samples.

If users have to read a paragraph of text to understand a chart, the chart is shit.

A less salty way to put it would be that the chart is missing two labels: “Original prompt” and “Poisoned prompt”.

The second isn’t even a prompt. I can’t fault you for getting it wrong though, because the chart is so shit!

Not very clear indeed. Each column is a particular image that has been poisoned, and as the level of poisoning increases, the generated images degrade and turn into something completely different.

I’m just gonna be direct. If you cannot understand that chart, you severely lack understanding of context.

If you just look at 3 pictures in one row and read the text you should easily be able to understand what the chart is about… That’s like 10 year old logical thinking, if not even younger.

Unrelated to much in the way of the article, but the middle tier of anime poisoned with cubism actually looks sorta cool

This fight is over.

If this is all artists brought to the table, it wasn’t even a fight. SD is trained on vast data sets, this little effort won’t be but a drop in the ocean.

More than that - there is no need for new inputs. Massive datasets exist independently. I’ve got one just from a long-term habit of saving images. And my big fat pile of JPGs doesn’t matter, because these models are already out there, in the wild, with communities built on screwing around with them.

The horse left the barn a year ago. It is already too late to stop this. We can bicker about moral and legal rights surrounding published content, but any suggestion of un-inventing this technology is a misguided fantasy.

There is no “if.” This fight is over.

Wasn’t there already a tool like this called Glaze?

This is pretty much Glaze 2. It just intentionally poisons the data set with specific targets so the model is more fucked. Originally it was just noise being put in, and ultimately an image that had been glazed would just get tossed. With this, the image will actually fuck up the resulting model if there is enough poisoned data included.

Probably, I’m not an expert obviously.

I absolutely love this. I’m not even an artist, but I’m giddy over this.

Don’t be too giddy, it won’t work. SD is already trained on poisoned datasets to help it differentiate poorly generated images. We call it “adversarial training”. If this was gonna stop us from making AI artwork, it already would have.

Begun, the AI wars have.

The idea has some merit, but it’s harder to implement than it looks. Model-based image generation is heavily biased towards typical values, so you’d need a lot of poison to do it. And that poison would need to be consistent: it doesn’t work if you tell the model now that cats are dogs and then that ferrets are dogs, you need to pick one.

I’m rather entertained by the number of fallacies and assumptions ITT though. I get that you guys are excited about model-based image gen; frankly, I’m the same when it comes to text gen. But those two things won’t help; learn the difference between “X is true” and “I want X to be true”.

Meanwhile with AI videos (more in the Aibient channel). Intoxicate AI LOL, it made it as a feature