AMD’s new CPU hits 132fps in Fortnite without a graphics card::Also get 49fps in BG3, 119fps in CS2, and 41fps in Cyberpunk 2077 using the new AMD Ryzen 8700G, all without the need for an extra CPU cooler.

I have routinely been impressed with AMD integrated graphics. For my last laptop I specifically went for one, as it meant I didn’t need a dedicated GPU, which adds significant weight, cost, and power draw.

It isn’t my main gaming rig of course, but I have had no complaints.

Same. I got a cheap Ryzen laptop a few years back and put Linux on it last year, and I’ve been shocked by how well it can play some games. I just recently got Disgaea 7 (mostly to play on Steam Deck) and it’s so well optimized that I get steady 60fps, at full resolution, on my shitty integrated graphics.

I have a Lenovo ultralight with a 7730U mobile chip in it, which is a pretty mid cpu… happily plays minecraft at a full 60fps while using like 10W on the package. I can play Minecraft on battery for like 4 hours. It’s nuts.

AMD does the right thing and uses their full graphics uArch CU’s for the iGPU on a new die, instead of trying to cram some poorly designed iGPU inside the CPU package like Intel does.

I was sold on AMD once I got my Steam Deck.

same here. or at least i finally recognized their potential. but it’s not just the performance, it’s the power efficiency too!

Everything I see about AMD makes me like them more than Intel or Nvidia (for CPU and GPU respectively). You can’t even use an Nvidia card with Linux without running into serious issues.

I mean, they make the chips in the PS5 and Xbox too.

AMD’s integrated GPUs have been getting really good lately. I’m impressed at what they are capable of with gaming handhelds and it only makes sense to put the same extra GPU power into desktop APUs. This hopefully will lead to true gaming laptops that don’t require power hungry discrete GPUs and workarounds/render offloading for hybrid graphics. That said, to truly be a gaming laptop replacement I want to see a solid 60fps minimum at at least 1080p, but the fact that we’re seeing numbers close to this is impressive nonetheless.

I hope red and blue both find success in this segment. Ideally the strengthened APU share of the market exerts pressure on publishers to properly optimize their games instead of cynically offloading the compute cost onto players.

Hell yeah, I want EVERYONE to make dope ass shit. I’ve made machines with both sides, and I hate tribal…ness. My current machine is a 9900k that’s getting to be… five years old?! I’d make an AMD machine today if I needed a new machine. AMD/Intel rivalry is so good for us all. Intel slacked so hard after the 9000-series. I hope they come back.

Intel has slacked hard since the 2000-series. One shitty 4 core release after another, until AMD kicked things into gear with Ryzen.

And during that time you couldn’t buy Intel due to security flaws (Meltdown, Spectre, …).

Even now they are slacking, just look at the power consumption. The way they currently produce CPUs isn’t sustainable (AMD pays way less per chip with the chiplet design and is far more flexible).

Ayyyy the 9900k was best in class when it was released.

Otherwise I fully absolutely agree with you.

I only went Intel because the benchmarks were amazing.

In fairness, computers have aged a lot better than they did 20 years ago, in the far-off year of 2000.

My computer is a desktop from 2013 or so (with an i7-4770), and other than some upgrades to the RAM (8 -> 16 GB) and the graphics (GT640 -> RX570), it’s still fairly solid, and will run most things fairly decently.

By comparison, trying to use a computer from 2005 in 2015 was a much tougher ask, since it struggled a lot more with even just bare Windows 10.

My guest machine is a 4670k/16GB/1070! It still runs most games perfectly. Amazing processor series.

Edit: my Crysis machine I built when it came out was pretty rough after like three years. Athlon 64x2 4400+, 4GB, 2x7900GT SLI. It’s insane how much longer computers last now. SSDs help a tonnnnn

Common W for AMD

The good news is, Framework is shipping with AMD CPUs now. :)

Currently 7th gen Ryzens, not sure when the 8th gens become available.

deleted by creator

Amen!

That’s not how this works

What do you mean?

Laptops don’t have the same amount of cooling as desktop computers, so the same CPU in a laptop won’t give you the same performance as in a desktop computer because of thermal throttling.

Maybe not the exact performance. But I’m pretty sure the main use for integrated graphics is with laptops and other form factor PCs.

Oh, oh ok I thought one of the new Threadrippers is so powerful that the CPU can do all those graphics in Software.

It’s gonna take decades to be able to render 1080p CP2077 at an acceptable frame rate with just software rendering.

It’s all software, even the stuff on the graphics cards. Those are the rasterisers, shaders and so on. In fact the graphics cards are extremely good at running these simple (relatively) programs in an absolutely staggering number of threads at the same time, and this has been taken advantage of by both bitcoin mining and also neural net algorithms like GPT and Llama.

It’s a shame you’re being downvoted; you’re not wrong. Fixed-function pipelines haven’t been a thing for a long time, and shaders are software.

I still wouldn’t expect a threadripper to pull off software rendering a modern game like Cyberpunk, though. Graphics cards have a ton of dedicated hardware for things like texture decoding or ray tracing, and CPUs would need to waste even more cycles to do those in software.

For people like me who game once a month, and mostly stupid little games, this is great news. I bet many people could use that. It would reduce demand for graphics cards and allow those who want them to buy them cheaper.

Only downside if integrated graphics becomes a thing is that you can’t upgrade if the next gen needs a different motherboard. Pretty easy to swap from a 2080 to a 3080.

Integrated graphics is already a thing. Intel iGPU has over 60% market share. This is really competing with Intel and low-end discrete GPUs. Nice to have the option!

Yeah, I know integrated graphics is a thing. And that’s been fine for running a web browser, watching videos, or whatever other low-demand graphical application was needed for office work. Now they’re holding it up against gaming, which typically places large demands on graphical processing power.

The only reason I brought up what I did is because it’s an if… if people start looking at CPU integrated graphics as an alternative to expensive GPUs it makes an upgrade path more costly vs a short term savings of avoiding a good GPU purchase.

Again, if one’s gaming consists of games that aren’t high demand like Fortnite, then upgrades and performance probably aren’t a concern for the user. One could still end up buying a GPU and adding it to the system for more power assuming that the PSU has enough power and case has room.

For a slightly different perspective, I will not game on anything other than a Steam Deck. So, this is kind of perfect for me. But I am a long hauler with hardware, so I typically upgrade everything all at once anyway.

AMD has been pretty good about this though, AM4 lasted 2016-2022. Compare to Intel changing the socket every 1-2 years, it seems.

Actually, AMD is still releasing new AM4 CPUs now. The 5700X3D was just announced.

Oh, now that sounds like something I might like

I don’t have the fastest RAM out there, so whenever I upgrade from my 1600, I want an X3D variant to help with that

There’s a 5600X3D as well.

I think I’m gonna get one of the higher end models, since it’ll be the last possible upgrade I can do on my motherboard.

So 5700X3D or 5800X3D, depending on what the prices look like whenever I’m gonna be in the market for them. And then I’ll be set for a looong while. Well, an appropriately fast GPU would be nice to go along with it, but you know.

But it’s pretty cool that they made a 5600 variant too. Might as well use the chips they evidently still have left over

Do it!

That’s true, but I’m excited about the future of laptops. Some of the specs are getting really impressive while keeping low power draw. I’m currently jealous of what Apple has accomplished with literal all-day battery life in a 14-inch laptop. I’m hopeful some of the AMD chips will get us there in other hardware.

Could you not just slot in a dedicated video card if you needed one, keeping the integrated as a backup?

Yeah, maybe. I commented on that elsewhere here. If we follow a possible path for IG, the elimination of a large GPU could result in the computer being sold with a smaller case and a lower-power PSU. Why would you need a full tower when you can have a more compact PC with a sleek NVMe/SSD and a smaller motherboard form factor? Now there’s no room to cram a 3080 in the box and no power to drive it.

Again, someone depending on CPU IG to play Fortnite probably isn’t gonna be looking for upgrade paths. This is just an observation of a limitation imposed on users should CPU IG become more prominent. All hypothetical at this point.

Or y’know, upgrade the case at the same time.

Or even build the computer yourself. Outside of the graphics card shortage a couple of years back, it’s usually been cheaper to source parts yourself than pay an OEM for a prebuilt machine.

A small side note: If you buy a Dell/Alienware machine, you’re never upgrading just the case. The front panel IO is part of the motherboard, and the power supply is some proprietary crap. If you replace the case, you need to replace the motherboard, which also requires you to replace the power supply. At that point, you’ve replaced half the computer.

Same thing with HP. Their “Pavilion” series of towers contains a proprietary motherboard and power supply. Also, on the model a friend of mine had, the CPU was AMD, but the cooler screwed on top was designed for Intel-purposed boards, so it looked kinda frankensteined.

So in essence, it’s the same with HP.

And the shared RAM. Games like Star Trek Fleet Command will crash your computer by messing with that/memory leaks galore. Far less crashy with a dedicated GPU. How many other games interact poorly with integrated GPUs?

AMD keeps the same sockets for ages. I was able to upgrade a 5 year old Ryzen 5 2600G to a 5600G last month. Can’t do that with Intel in general.

or it may end up making for a push for longer lifetimes for motherboards

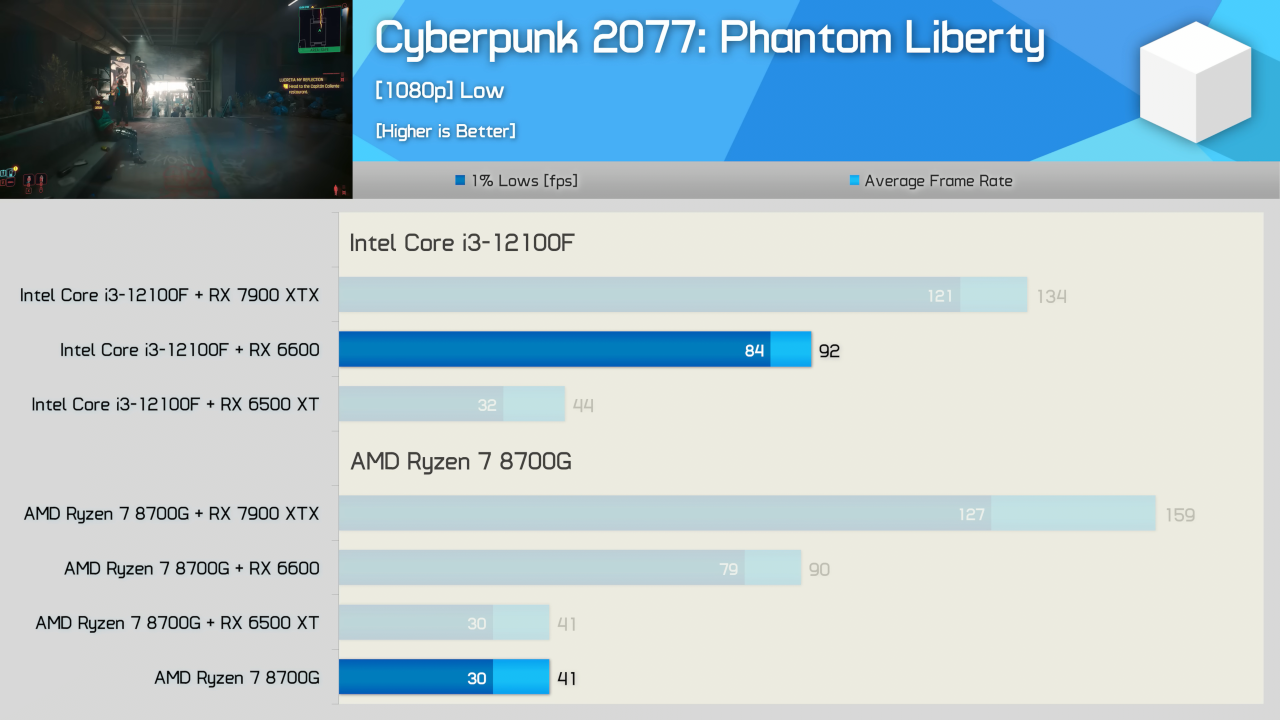

Mind you that it can get these frame rates at the low setting. While this is pretty damn impressive for an APU, it’s still a very niche market type of APU at this point and I don’t see this getting all that much traction myself.

I think the opposite is true. Discrete graphics cards are on the way out, SoCs are the future. There are just too many disadvantages to having a discrete GPU and CPU each with its own RAM. We’ll see SoCs catch up and eventually overtake PCs with discrete components. Especially with the growth of AI applications.

People will be building dedicated AI PCs.

They may build dedicated PCs for training, but those models will be used everywhere. All computers will need to have hardware capable of fast inference on large models.

I agree, especially with the prices of graphics cards being what they are. The 8700G can also fit in a significantly smaller case.

Unified memory is also huge for performance of AI tasks. Especially with more specialized accelerators being integrated into SoCs. CPU, GPU, Neural Engine, Video encoder/decoders, they can all access the same RAM with zero overhead. You can decode a video, have the GPU preprocess the image, then feed it to the neural engine for whatever kind of ML task, not limited by the low bandwidth of the PCIe bus or any latency due to copying data back and forth.

My predictions: Nvidia is going to focus more and more on the high-end AI market with dedicated AI hardware while losing interest in the consumer market. AMD already has APUs, they will do the next logical step and move towards full SoCs. Apple is already in that market, and seems to be getting serious about their GPUs, I expect big improvement there in the coming years. No clue what Intel is up to though.

$US330 for the top 8700G APU with 12 RDNA 3 compute units (compare to 32 RDNA 3 CUs in the Radeon RX7600). And it only draws 88W at peak load and can be passively cooled (or overclocked).

$US230 for the 8600G with 8 RDNA 3 CUs. Falls about 10-15% short of 8700G performance in games, but a much bigger spread in CPU (Tom’s Hardware benchmarks) so I’m pretty meh on that one.

Given the higher costs for AM5 boards and DDR5 RAM, for about the same money or $100-200 more than an 8700G build, you could combine a cheaper CPU and a better GPU and get way more bang for your buck. But I see the 8700G being a solid option for gamers on a budget, or parents wanting to build younger kids their first cheap-but-effective PC.

I also see this as a lazy man’s solution to building small form factor mini-ITX Home Theatre PCs that run silent and don’t need a separate GPU to receive 4K live streams. I’m exactly in this boat right now where I literally don’t wanna fiddle with cramming a GPU into some tiny box, but also don’t want some piece of crap iGPU in case I use the HTPC for some light gaming from time to time.

It’ll be a great upgrade for these little NUC-like things, thin laptops, and Steam Deck competitors.

That’s pretty damn impressive. AMD is changing the game!

Meh. It’s also a $330 chip…

For that price you can get a 12th gen i3/RX6600 combination which will obliterate this thing in gaming performance.

Your i3 has half the cores. Spending more on GPU and less on CPU gives better fps, news at 11.

So what’s the point of this thing then?

If you just want 8 cores for productivity and basic graphics, you’re better off getting a Ryzen 7 7700, which is not gimped by half the cache and less than half the PCIe bandwidth. And for gaming, even the shittiest discrete GPUs of the current generation will beat it if you give them a half-decent CPU.

This thing seems to straddle a weird position between gaming and productivity, where it can’t do either really well. At that price point, I struggle to see why anyone would want it.

It’s like that old adage: there are no bad CPUs only bad prices.

which is not gimped by half the cache and less than half the PCIe bandwidth

Half L3, yes. 24 vs. 16 (available) PCIe lanes that’s not half, still enough for two SSDs and a GPU, if you actually want IO buy a threadripper. The 8700G has quite a bit more baseclock, 7700 boosts higher but you can forget about that number with all-core loads. About 9 times raw iGPU TFLOPs.

Oh, those TFLOPs. 4.5 vs. my RX 5500’s 5 (both in f32), yet in gaming performance mine pulls significantly ahead, must be memory bandwidth. Light inference workloads? VRAM certainly won’t be an issue just add more sticks. Those TFLOPS will also kill BLAS workloads dead so scientific computing is an option.
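For anyone wondering where TFLOP figures like these come from, here is a rough sketch of the usual peak-FP32 estimate: compute units × 64 lanes × 2 FLOPs per lane per clock (one fused multiply-add) × clock speed. The CU count for the 8700G is from this thread; the clock speeds (about 2.9 GHz for the 8700G's iGPU, about 1.845 GHz boost for the RX 5500) and the RX 5500's 22 CUs are my assumptions, and RDNA 3 dual-issue is deliberately ignored, so treat the output as a ballpark only.

```python
def peak_fp32_tflops(compute_units: int, clock_ghz: float,
                     lanes_per_cu: int = 64, flops_per_lane: int = 2) -> float:
    """Theoretical peak FP32 throughput in TFLOPs.

    flops_per_lane=2 counts one fused multiply-add per lane per clock.
    This is an upper bound; real games are often limited by memory bandwidth.
    """
    gflops = compute_units * lanes_per_cu * flops_per_lane * clock_ghz
    return gflops / 1000.0


# 12 CUs is from the thread; the 2.9 GHz clock is an assumption.
igpu_8700g = peak_fp32_tflops(compute_units=12, clock_ghz=2.9)

# 22 CUs and the 1.845 GHz boost clock are assumptions for the RX 5500.
rx_5500 = peak_fp32_tflops(compute_units=22, clock_ghz=1.845)

print(f"8700G iGPU: {igpu_8700g:.2f} TFLOPs")
print(f"RX 5500:    {rx_5500:.2f} TFLOPs")
```

Under those assumptions the two land close to the 4.5 and 5 quoted above, which is why the gaming gap is more plausibly about memory bandwidth than raw compute.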

Can’t find proper numbers right now but the 7700 should have a total of about half a TFLOP CPU and half a TFLOP GPU.

So, long story short: When you’re a university and a prof says “I need a desktop to run R”, that’s the CPU you want.

24 vs. 16 (available) PCIe lanes that’s not half

24x PCIe 5.0 vs 16x PCIe 4.0

So 8 fewer lanes, and each lane has half the bandwidth = less than half the PCIe bandwidth.
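To put rough numbers on that, here is a minimal Python sketch of the arithmetic. The lane counts (24 usable on the 7700, 16 on the 8700G) are the ones quoted in this thread; the per-lane rates are the standard 16 GT/s for PCIe 4.0 and 32 GT/s for PCIe 5.0 with 128b/130b encoding. The function and variable names are just for illustration, and protocol overhead beyond line encoding is ignored.

```python
# Raw per-lane signalling rate in GT/s for each PCIe generation.
GT_PER_S = {"4.0": 16.0, "5.0": 32.0}

# Both generations use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def lane_gb_per_s(gen: str) -> float:
    """Approximate one-direction bandwidth of a single lane in GB/s."""
    return GT_PER_S[gen] * ENCODING_EFFICIENCY / 8


def total_gb_per_s(gen: str, lanes: int) -> float:
    """Aggregate one-direction bandwidth across all usable lanes."""
    return lane_gb_per_s(gen) * lanes


# Lane counts as quoted in the thread.
bw_7700 = total_gb_per_s("5.0", 24)
bw_8700g = total_gb_per_s("4.0", 16)
ratio = bw_8700g / bw_7700

print(f"Ryzen 7 7700:  {bw_7700:.1f} GB/s")
print(f"Ryzen 7 8700G: {bw_8700g:.1f} GB/s")
print(f"8700G has {ratio:.0%} of the 7700's aggregate PCIe bandwidth")
```

With those inputs the 8700G comes out at about a third of the 7700's aggregate bandwidth, so "less than half" holds. Whether that matters with one GPU and a couple of SSDs is a separate question.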

But isn’t the point of this post that the CPU still runs games okay without a dedicated video card?

It’s hardly a useful comparison to compare the CPU on its own against a Video Card + CPU.

If it was the i3 on its own, that might be a different story.

It’s hardly a useful comparison to compare the CPU on its own against a Video Card + CPU.

It is a useful comparison if the latter combination is the same price

Is this a pun?

So will this be a HTPC king? Kind of skimped on the temps in the article. I assume HWU goes over it and will watch it soon.

The page on AMD’s website says 65W TDP so much the same as any other desktop CPU. Might be a bit much for HTPC depending on cooling? I dunno

I’m interested in this for my TrueNAS server to offload Plex transcoding. I’m about due for an upgrade, the current hardware is about 10 years old.

From my understanding, transcode quality is a concern. I’ve unfortunately read AMD’s implementation just isn’t very good. One is better off going Intel, particularly with chips from the last few years.

Jellyfin’s docs specifically talk about the issue.

Intel’s transcoding is also faster in the same generation.

Been debating which way to go for my next rebuild as I’m overdue myself.

I mostly transcode overnight using tdarr to a format that’s compatible with most of my devices, but for on-the-fly it’s nice to have a performant hardware option. I was really hoping to get away from Intel for the next build though

What services use the graphics card and are fine with the low end?

The PlayStation 5 also does this.

Aaaaand the 7950x3D is not top tier anymore

Back in my day the 7950 was a GPU!

Yelling at clouds

Mine is still running nicely :)