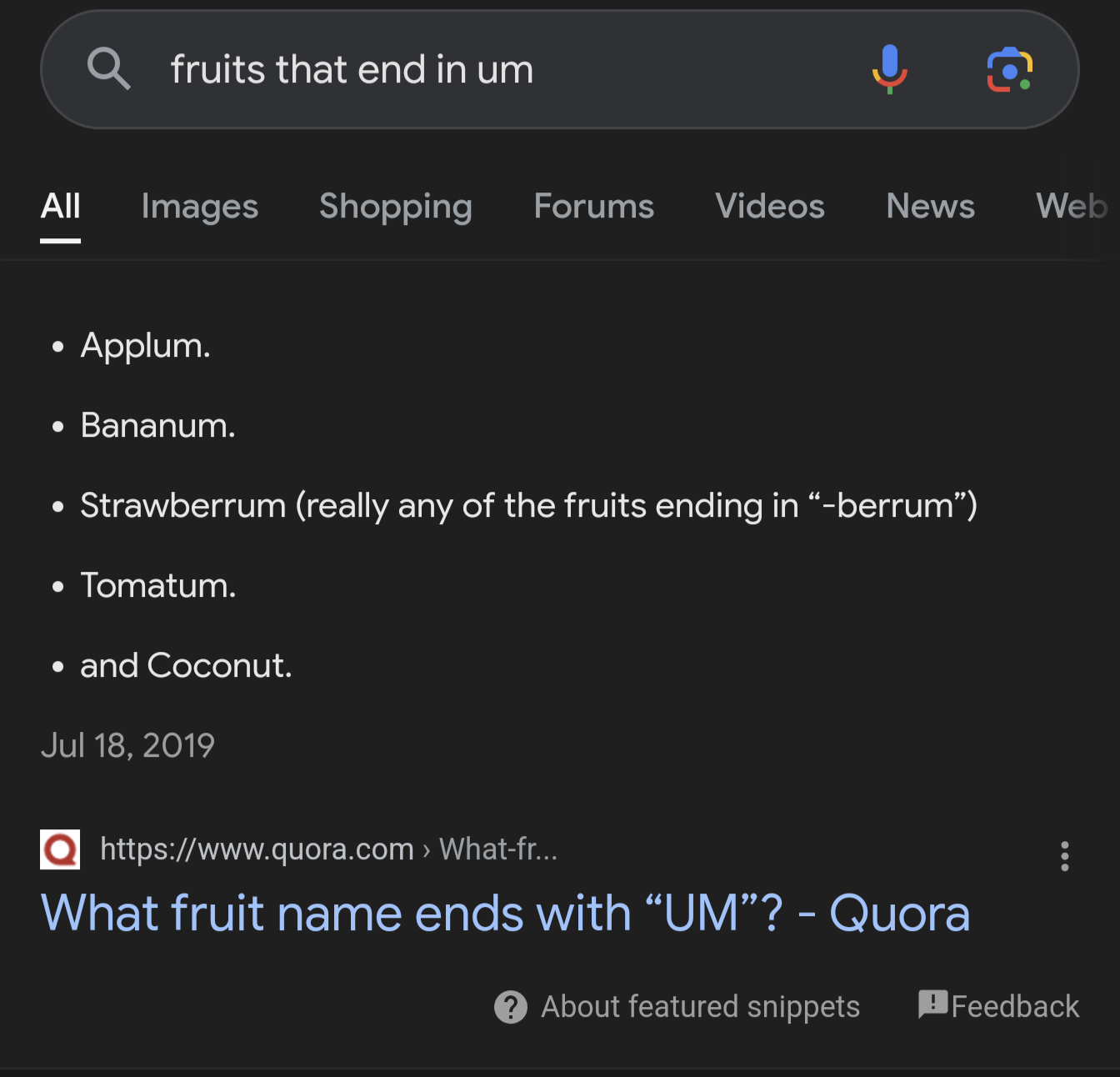

Looks like it’s learned that adding “according to Quora” makes it look more authoritative. Maybe with a few more weeks of training it’ll figure out how to make fake citations of sources that are actually trustworthy.

Just wait until it starts taking stuff from 4chan, twitch, and twitter. Things are going to become so much more interesting.

Google signing a contract with 4chan for data training is actually so stupid I don’t think it’ll ever happen.

4chan is almost certainly blacklisted from basically everything AI, given the site’s content and history of intentionally destroying chatbots and earlier “AIs”.

But at the same time they paid reddit millions to train on “authoritative” posts like that one from “fuckSmith” that suggested adding glue to pizza.

Yep and I still barely believe they did so, reddit has a whole lot of stupid.

It’s almost as stupid as Musk buying Twitter.

Hooray clown world I guess.

As @Karyoplasma@discuss.tchncs.de pointed out, this is an actual answer on Quora so at least it got that right

I think it’s also a way of shifting the blame.

For some reason I don’t have AI search on my account, but I still get the same answer:

That’s probably a real answer from someone on Quora then

What’s the point in having an AI run the search and present the found answer for you, when you just ran the search yourself and get the AI’s finding presented?

At this point AI helpers are just a layer that hides the details from the original search. It’s useless for this. AI is wonderful for lots of stuff, but this just isn’t it. I used to laugh when people used the Google search box to find Google so they could search in Google, but that is exactly what AI is doing for us now.

Plus the insane power consumption for such a marginally useful feature. Especially given that it’s on by default for everyone using google (as I understand)

It’s almost like the feature is not ready but they need to show off to their investors anyway. At the cost of user experience and the environment.

At least with ChatGPT you have to consciously go to their website and use it, rather than it being the first result of a fucking internet search.

Yes, the search engine AI is a very expensive and shitty filter.

Unfortunately with SEO going nuts these days, it might be necessary to have some kind of spam filter for searching the web just to avoid some of the enshittification created by AI in the first place. Like, the AI goes to Quora and Reddit for answers instead of the marketing links, so at least the answers are less commercial.

Obviously these “human” sources will eventually be, or already are, shittified too, with half the posts there also being marketing in one way or another.

I dislike it, but flooding the web with useless crap may be the key to making some better alternative…

More eyes on your website means fewer on other websites, making your adverts more valuable.

And when it doesn’t work, it doesn’t matter, because you run the advertising on the other websites too. Bonus: you can penalise rankings for websites that don’t use your advertising network.

Was having a related conversation with an employee this morning (I manage a software engineering organization). He asked an LLM how to separate the parts of a date in Excel, and got a pretty good explanation of how to do it with the text to columns wizard, and also how to use a formula to get each part. He was happy because he felt it would have taken him much longer to figure it out himself.

I was saying I thought that was a good use of an LLM - it’s going to give a tailored answer - but my worry is that people will do less scrubbing of an answer coming from an AI than one they saw on a forum. I said we should think of it like a tailored Google search.

For comparison, I googled “Excel formula separate parts of a date” and one of the top results was a forum discussion that had the exact solutions the LLM gave, using the same examples. On the one hand, to get it from the forum you had to wade through all the wrong answers and discussions. On the other hand, that discussion puts the answer in the context of a bunch of others that are off the mark, and I think that makes people less likely to assume it’s correct.

In any case, it’s still just synthesizing from or regurgitating training data.

I think LLMs are better for more fluffy stuff, like writing speeches etc.

Excel solutions are often very specific. A vague question like separating a date can be solved in many ways, using a variety of formulas, the text-to-column wizard, VBA, import queries or even just formatting, all depending on what you really need, what the input is, which locale is used, and so on.

The text-to-column method is great, because it transforms whatever the input is into a date type, making it possible to treat it as an actual date and do calculations with it. It’s not always the right solution though, for instance if the input is ambiguous.
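To make the ambiguity point concrete, here is a rough sketch in Python rather than Excel. The `split_date` helper and the sample string are made up for illustration, and this is not what the wizard does internally. It only shows that the same text splits into different parts depending on which day/month order you assume.

```python
from datetime import datetime
from typing import Tuple


def split_date(text: str, fmt: str) -> Tuple[int, int, int]:
    """Parse `text` using an explicit format and return (year, month, day).

    Hypothetical helper for illustration only.
    """
    parsed = datetime.strptime(text, fmt)
    return parsed.year, parsed.month, parsed.day


raw = "03/04/2024"  # made-up input: 4 March or 3 April?

month_first = split_date(raw, "%m/%d/%Y")  # read as month/day/year
day_first = split_date(raw, "%d/%m/%Y")    # read as day/month/year

print(month_first)  # (2024, 3, 4)
print(day_first)    # (2024, 4, 3)
```

Both calls succeed without any warning, so whoever (or whatever) picks the format has to know where the data came from.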

It’s fine that he learned to use this method, but I wonder what he’d ask the LLM in a case where it isn’t the right solution and what it’ll come up with then. He didn’t actually learn to separate a date from the input. He learned to use the text import wizard.

In my experience it’s preferable to learn these things on a more basic level, if only to be able to search more specifically for the right answer, because there is a specific answer. Having a language model run through a bunch of solutions and present the most popular one might just be a waste of time and lead you on a wild goose chase.

You might have missed where I said it explained both the text to columns wizard and a formula. He used the formula, which is what he was looking for. He’s a top notch software developer, he just doesn’t use Excel much.

But I agree with your broader point. I keep having to remind people that the “LM” part is for “language model.” It’s not figuring anything out, it’s distilling what an answer should look like. A great example is to ask one for a mathematical proof that isn’t commonly found online - maybe something novel. In all likelihood, it’s going to give you one, and it will probably look like the right kind of stuff, but it will also probably be wrong. It doesn’t know math (it doesn’t know anything), it just has a model of what a response should look like.

That being said, they’re pretty good for a number of things. One great example is lesson plans. From what I understand, most teachers now give an LLM the coursework and ask it to generate a lesson plan. Apparently they do an excellent job and save many hours of work. Anything that involves summarizing information is good, especially as that constrains the training data.

Most likely an answer written by another AI directly on Quora then

5 years ago?

GPT has been around for a long ass time.

I know. It turned me into a newt and cursed my crops.

So many fruits in the berrum family, can’t believe they even had to google that question…

I love schnozzberrum

“If it’s on the internet it must be true” implemented in a billion dollar project.

Not sure what would frighten me more: the fact that this is training data or if it was hallucinated

Neither, in this case it’s an accurate summary of one of the results, which happens to be a shitpost on Quora. See, LLM search results can work as intended and authoritatively repeat search results with zero critical analysis!

Pretty sure AI will start telling us “You should not believe everything you see on the internet as told by Abraham Lincoln”

Can’t even rly blame the AI at that point

Sure we can. If it gives you bad information because it can’t differentiate between a joke and good information…well, seems like the blame falls exactly at the feet of the AI.

Should an LLM try to distinguish satire? Half of lemmy users can’t even do that

Do you just take what people say on here as fact? That’s the problem, people are taking LLM results as fact.

It should if you are gonna feed it satire to learn from

Sarcasm detection is a very hard problem in NLP to be fair

If it’s being used to give the definitive answer to a search, then it should. If it can’t, then it shouldn’t be used for that.

Wow they really did it.

They put the um in the coconut and shake it all up

It would appear the coconut is the only one they didn’t put the um in though

Have an angry (and admiring) upvote.

There goes my making shit up job.

Now LLMs are even taking the jobs of professional trolls! What’s gonna be next? The scambots losing their jobs to LLMs?!

the scammers are already using ‘ai’

It’s already happening! We’re all gonna die!

I’ll allow that one because it said “According to Quora” so you knew to ignore it.

Looks like AI is lots and lots of “artificial” and close to nothing in the area of “intelligence”.

as real as artificial cheese.

AI is just very creative, ok?

Coconut um!

“AI” has always been like this… It’s not sentient or rational… It’s not thinking it’s just averaging language… It’s not really AI, it’s a language model.

Language models are just one type of AI, so it’s overly reductive to say that all AI is like this. The computer players in Mario Kart are also AI, for example.

any computer is AI

No it isn’t

Calculators, books, dna, wildfires and matter too. Expand a definition enough and it becomes less meaningful.

…and “artificial” as well as “intelligence” weren’t all that well defined to begin with.

According to this shit I just made up, 95% of all quotes come from Benjamin Franklin. Using equally loose definitions, my lies are AI.

See also: Alternative Facts

I for one am enjoying this AI thing at Google. I haven’t had that many laughs from just searching for things.

I think the names end with um in Latin.

https://www.wordhippo.com/what-is/the/latin-word-for-d0be2dc421be4fcd0172e5afceea3970e2f3d940.html

Everything ends with um in Latin!

Hoc casu non est

So why does everything end with a vowel in modern Italian?

Latinum. Fixed that for you.

I love how it just gave up on coconut

Coconut

Always trust user input. Surely the AI will figure it out.