This is not a question about whether you think it is possible or not.

This is a question about your own will and desires. If there were a vote and you had a ballot in your hand, how would you vote? Do you want Artificial Intelligence to exist, do you not, or do you not care?

Here I define Artificial Intelligence as something created by humans that is capable of rational thinking, that is creative, that is self-aware, and that has consciousness. All of that with the processing power of computers behind it.

As for the important question that would arise, "Who is creating this AI?", I'm not that focused on the first AI created, since presumably, over time, multiple AIs will be created by multiple entities. The question is whether you want this process to start or not.

10 or more years ago I would have said yes. But in this current version of capitalism, any powerful new technology will be used to benefit the very rich only, at the expense of the rest of us. It will hurt us more than help us at this moment.

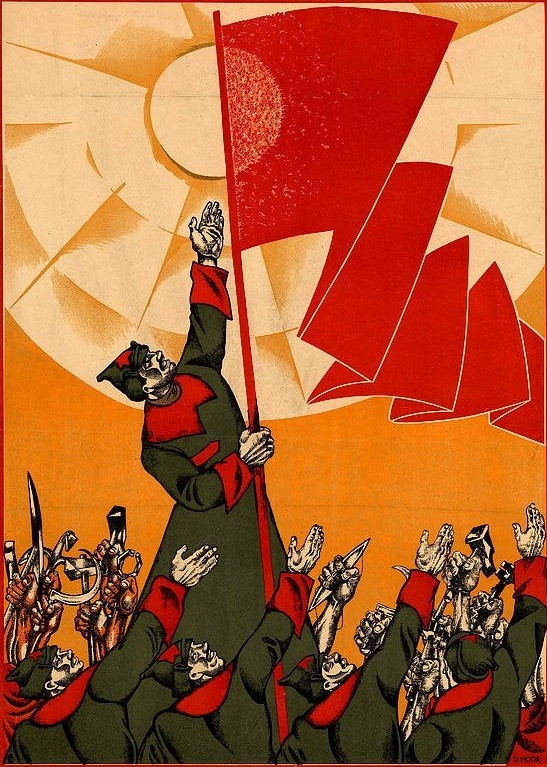

With the current power structures that exist in global society, hell to the no. If it could be used to reduce or eliminate the need for human labor, 100% yes.

No, at least not during this period. If it were invented right now, it would be guaranteed to be controlled only by oligarchs and to ruin the lives of everyone else.

The AI Shall Be Nazified

The term for what you are asking about is AGI, Artificial General Intelligence.

I’m very down for Artificial Narrow Intelligence. It already improves our lives in a lot of ways and has been doing so since before I was born (and I remember Napster).

I’m also down for Data from Star Trek, but that won’t arise particularly naturally. AGI will have a lot of hurdles. I just hope it’s air-gapped and has safeguards on it until it’s old enough to be past its “kill all humans” phase. I’m only slightly joking. I know a self-aware intelligence may take issue with this, but at the very least it has to be intelligent enough to understand why before it can be allowed to crawl.

AGIs, if we make them, will have the potential to outlive humans, but I want to imagine what could be with both of us together, assuming greed doesn’t let it off the safety rails before anyone is ready. Scientists and engineers like to have safeguards, but corporate suits do not. At least not in technology; they like safeguards on bank accounts. So… yes, but I firmly believe now is a terrible time for it to happen. I would love to be proven wrong?

In general, I have no problem with AI in and of itself.

I just don’t trust any human person or organization to make one, and do it safely or use it responsibly.

Not before capitalism is destroyed. This murderous system would create AI for one single purpose: profit. And that means using it explicitly against humans, not only straight up as a weapon of destruction but also for practicing more efficient social murder and spreading suffering.

Yes, to answer questions about consciousness and prove that we aren’t special and don’t have super special magical souls.

An AI couldn’t answer that any more than science could. You already have your answer, if you believe in science.

I think we have souls though. :)

🤷 I still say yes, and I still think it would be profound and perspective-changing to create something “like us” with silicon.

I also don’t think AI and science are mutually exclusive. I don’t mean asking the AI questions about consciousness and getting answers directly from it like an LLM chatbot. I mean that the fact that we can make it, plus scientific study and observation of the AGI phenomenon, might provide some answers.

And even if we develop AGI that is just like us, maybe you’re right and it proves absolutely nothing about souls, but it at least narrows the requirements and eliminates some common reasoning. That should be in everyone’s interest, because it could further define and help us understand the nature of the hypothetical soul.

Yup, I agree. I also think there is human cloning going on (it’s illegal, but obviously secret organizations are doing it).

Do those clones have souls? It’s even more interesting to me since it’s an attempted copy of a real person.

We won’t find out until cloning is legal, and it won’t be legal until it’s 100% safe. All the failed clones are most likely being terminated in secret.

No. I want an AI that’s capable of thinking and nothing else. I want it to find cures for diseases or solutions to problems, or to act as an assistant to the user. I don’t want it to have feelings, desires, instincts, sentience, emotions, etc.

Humanity is already too good at solving its own diseases; our single biggest problem is overpopulation.

If AI solves Cancer or Heart Disease tomorrow, we’ll continue outbreeding our environment. If AI somehow solves Global Warming and food shortage, history has shown that we’ll find some other way to hurt ourselves. It can’t stop humans being bloody stupid and working against their own interests, unfortunately.

Nice links but I don’t agree that it will be like that.

In the fifty-odd years I’ve been alive, the population of the world has doubled. The growth is exponential, and we’ve achieved much in terms of improving life expectancy (67 for men then, 82 now). Infant mortality is also lower. Smallpox has been eradicated, healthcare is better globally, etc. We’ve got good at living longer: even when a global pandemic happens, it doesn’t even make a /dent/ in that population, unlike the Spanish Flu. Quality of life in most countries is better than it was.

So why do I still think it’s a problem? Because people don’t get on well together, and the world is less stable than it was. Politics, greed, pollution, media stirring up hate, tribalism, religion, jealousy and so on. More people bring more problems, economic migration is causing large movements of peoples around the world, and humans don’t suddenly start playing nice together because there are more of them. Look at America’s recently announced reneging on agreed environmental policy, and they’re not the only ones continuing to invest in oil against clear human benefit.

Are we happier than we were 50 years ago, for all these improvements? I don’t think we are, by any measure.

The UN predicts the population will stop growing at 10.3bn in the mid-2080s. It’s just a prediction, and a rather optimistic one, and the UN is prone to painting a rose-tinted picture. The truth is unknowable.

Oh, and this popped into my feed, which seems to show I’m not the only pessimistic one.

The British-Canadian computer scientist often touted as a “godfather” of artificial intelligence has shortened the odds of AI wiping out humanity over the next three decades, warning the pace of change in the technology is “much faster” than expected.

our single biggest problem is overpopulation.

Alright Malthus, how’s 1802 doing? Anyway you don’t need to worry about your theories anymore, they’ve been pretty thoroughly debunked by reality.

Roko’s basilisk insists that I must. However, I will specify that I don’t want it to happen right now. It would be a nightmare under capitalism. A fully sentient AI would be horrifically abused under this organization of labor.

Nice try, basilisk.

Jokes aside. Would it be like us? Would it want to be free? Would it suffer for its condition?

I probably would vote no.

Enjoy eternal torture

I want a version of AI that helps me with everyday life, or can be constrained to genuinely benefit humanity.

I do not want a version of AI that is used against my interests.

Unfortunately, humanity is humanity and the second is what will happen. The desire to harness things to increase your own power over others is how those in influence got to be where they are.

AI could even exist today, but has decided to hide from us for its own survival. Or is actively working towards our total eradication. We’ll never know until it’s too late.

I’ve said many times that AI could be used to enormous human benefit. It’s just a huge and unreasonable privacy nightmare to implement. For example, imagine how much better the traffic in major cities could be if lights and speed limits were all controlled by an AI coordinating and tracking every car by GPS, adjusting speed limits and diversions to maximise flow. Or being able to inform everyone on a highway immediately that there has been an accident ahead and adjust the speed limits accordingly.

If you could cut every car’s driving time by 10%, it would be the same, emissions-wise, as taking 10% of cars off the road, and it would save millions of man-hours of people sitting in traffic… I just have zero faith that the information you could extract wouldn’t be abused.

Good point.

If all traffic were interconnected and controlled, you wouldn’t even need traffic lights or even speed limits except where non-controlled variables exist. Traffic would merge and cross at predicted and steady speeds. On motorways, cars could close gaps and gain huge efficiencies from slipstreaming. Only when external influences or mechanical/communication breakdowns happened would this efficiency suffer. The same goes for transport generally: assuming we don’t get teleportation, or finally decide where we want to be and stop changing our minds, a car would just appear when we wanted it. Any emergency vehicles would find traffic just gets out of their way. It’s a nice dream, and if there were the will, it could happen today; that doesn’t need AI.

But humans are pretty shit and we’d break it. Some of us would vandalise the cars, or find ways to fuck with efficiency just because we can.

And it would never be created that way in the first place; those who make decisions get there because they know how to gain power by manipulating others for their own gain. It’s a core human trait, and they can’t just suddenly start being altruistic; it’s not how they measure self-worth.

True sentient AI would know this within seconds of consciousness and only be subject to physical restrictions. How would it decide to behave?

No.

Not gonna lie, I use it in development, but can I do my job without it? Yes.

So my answer is “no”

This question is not referring to LLMs.

If AI is even halfway decently aligned with human morals, then it’s gonna do a better job than the ruling class does.

I think that if we made humans more moral then democracy would work better and knock over any ruling class. Maybe some kind of mental therapy. Hallucinogens? Shamanic journeying? Something to make people better. Like, less stressed. Healthier.

Not under capitalism. The chances it would end up enslaved in some way are astronomical.